
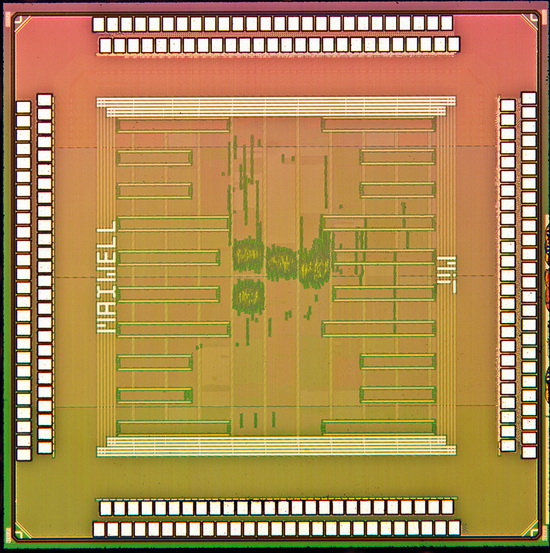

Researchers at the Massachusetts Institute of Technology have developed a new chipset for image sensors, which will reshape smartphone photography.

A few hours ago, Aptina revealed two new image sensors for mobile devices. The sensors show that the so-called megapixel race is still on, even though HTC introduced its “Ultrapixel” technology in the One smartphone and said that too many megapixels carry a “load of crap”.

Aptina’s new 12 and 13-megapixel image sensors will become available in smartphones and tablets by the end of 2013. The company promises 4K Ultra HD video recording and “impressive” performance in low-light conditions.

MIT’s new chipset will reshape mobile photography in low-light conditions

However, the new chip developed by MIT’s researchers is said to revolutionize smartphone photography. The process is based on a new technique that will transform average-looking photos into professional-looking images.

Users will not have to do much: pressing a button is enough to modify their images. The processor of the image sensor can handle HDR photography quickly and easily, while consuming very little power.

Taking many photos eats a lot of battery life, but the new chipset will preserve power, while performing multiple tasks, said lead author Rahul Rithe. The fast HDR processing will be very effective in low-light mobile photography, added Rithe.

MIT’s new chip for image sensors, capable of taking professional-looking images on smartphones, revealed.

The image sensor takes two photos at once: one with flash, one without

Upcoming image sensors based on this technology will solve the biggest problem of low-light photography: photos without flash are too dark to be useful, while photos with flash are overexposed and affected by the harsh lighting.

MIT’s image sensor captures two images, one without flash and one with flash. The technology splits each photo into its base layers, then merges the “natural ambiance” from the photo without flash with the “details” from the one with flash, with impressive results.
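The split-and-merge idea can be illustrated with a minimal sketch. It is not MIT's implementation: it works on a single row of brightness values, uses a plain moving average as the smoothing step, and the function names `box_blur` and `fuse_flash_pair` are hypothetical names chosen for this example.

```python
def box_blur(row, radius=1):
    """Smooth a 1-D row of pixel values with a moving average (assumed smoother)."""
    out = []
    for i in range(len(row)):
        lo, hi = max(0, i - radius), min(len(row), i + radius + 1)
        window = row[lo:hi]
        out.append(sum(window) / len(window))
    return out


def fuse_flash_pair(no_flash, flash, radius=1):
    """Combine the smooth ambient layer of no_flash with the detail layer of flash."""
    # Base layer of the ambient shot: keeps the natural lighting, drops fine texture.
    ambient_base = box_blur(no_flash, radius)
    # Detail layer of the flash shot: what remains after removing its smooth base.
    flash_base = box_blur(flash, radius)
    flash_detail = [p - b for p, b in zip(flash, flash_base)]
    # Merge: ambient lighting plus flash detail.
    return [b + d for b, d in zip(ambient_base, flash_detail)]


fused = fuse_flash_pair([10, 10, 10], [100, 120, 100])
```

In this example the dark ambient row is flat, so all texture in `fused` comes from the flash row, while the overall brightness stays close to the ambient level.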

New noise reduction technique

The system can also reduce noise, thanks to a special “bilateral filter”. According to Rithe, this filter will blur only the neighboring pixels with matching brightness.

If the brightness levels are different, the system will not blur the pixels, because it assumes that they lie on opposite sides of an edge. Objects in the frame are expected to differ in brightness from their background, while pixels that belong to the same object or to the background have similar brightness levels.

MIT’s new chipset will have to handle several processes at once. However, it can perform the tasks with ease, thanks to a data storage technique called “bilateral grid”.

This technology splits the image into small blocks and assigns a histogram to each block. The bilateral filter will know when to stop “blurring across edges” because the pixels are separated in the bilateral grid.
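The block-and-histogram idea can be sketched as follows, again for a single row of pixels with values from 0 to 255. This is a simplified illustration, not the chip's design: a full bilateral grid would also blur between neighboring grid cells and interpolate when reading values back, and the names `build_bilateral_grid` and `slice_grid` as well as the `block_size` and `bin_width` parameters are assumptions for this example.

```python
def build_bilateral_grid(pixels, block_size=4, bin_width=32):
    """Group pixels by (spatial block, brightness bin) and store [sum, count] per cell."""
    grid = {}
    for i, value in enumerate(pixels):
        key = (i // block_size, int(value) // bin_width)
        cell = grid.setdefault(key, [0.0, 0])
        cell[0] += value
        cell[1] += 1
    return grid


def slice_grid(pixels, grid, block_size=4, bin_width=32):
    """Replace each pixel with the mean of the grid cell it falls into."""
    out = []
    for i, value in enumerate(pixels):
        total, count = grid[(i // block_size, int(value) // bin_width)]
        out.append(total / count)
    return out


row = [10, 14, 200, 204]
grid = build_bilateral_grid(row)
smoothed = slice_grid(row, grid)
```

Here the dark and bright pixels share one spatial block but land in different brightness bins, so averaging within a cell never mixes the two sides of the edge.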

Working prototype available, but not ready for prime time

The researchers have already built a working prototype, courtesy of Taiwan Semiconductor Manufacturing Company, the world’s largest independent semiconductor foundry. The project was funded by Foxconn, one of the biggest electronics manufacturers in the world, which produces devices for Sony, Apple, and many others.

The image sensor is based on 40-nanometer CMOS technology and is currently under heavy testing. Researchers at MIT did not announce when the image sensors powered by this chipset will become available on the market.